Computer

A computer is a machine that uses electronics to input, process, store, and output data. Data is information such as numbers, words, and lists. Input of data means to read information from a keyboard, a storage device like a hard drive, or a sensor. The computer processes or changes the data by following the instructions in software programs. A computer program is a list of instructions the computer has to perform. Programs usually perform mathematical calculations, modify data, or move it around. The data is then saved on a storage device, shown on a display, or sent to another computer. Computers can be connected together to form a network such as the internet, allowing the computers to communicate with each other.

The processor of a computer is made from integrated circuits (chips) that contain many transistors. Most computers are digital, which means that they represent information using binary digits, or bits. Computers come in different shapes and sizes, depending on the brand, model, and purpose. They range from small computers, such as smartphones and laptops, to large computers, such as supercomputers.

Characteristics

Two things that often define a computer are that it responds to a specific instruction set in a well-defined manner, and that it can execute a stored list of instructions called a program. There are four main actions in a computer: inputting, storing, outputting and processing.

Modern computers can do billions of calculations in a second. Because they calculate so quickly, modern computers can multitask, which means they can work on many different tasks at the same time, often by switching between them very quickly. Computers do many different jobs where automation is useful. Some examples are controlling traffic lights, vehicles, security systems, washing machines, and digital televisions.

Computers can be programmed to do almost anything with information. Computers now control large and small machines that, in the past, were controlled by humans. Billions of people have a personal computer at home or at work. They use them for things such as calculation, listening to music, reading, writing, or playing games.

Hardware

Modern computers are electronic machines, made of hardware. They do arithmetic very quickly, but computers do not really "think." They only follow the instructions in their software programs. The software uses the hardware to carry out the user's instructions and produce useful outputs.

Controls

Computers are controlled with user interfaces. Input devices include keyboards, computer mice, buttons, and touch screens.

Programs

Computer programs are designed or written by computer programmers. A few programmers write programs in the computer's own language, called machine code. Most programs are written in a programming language like C, C++, or JavaScript. These programming languages are closer to the language people speak and write every day. For languages like C and C++, a program called a compiler converts the programmer's instructions into binary code (machine code) that the computer can understand and carry out.
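The idea of a compiler can be shown with a very small sketch in Python. This is a toy, not how real C or C++ compilers work: it only handles whole numbers with `+`, `-`, and `*`, and the instruction names (`PUSH`, `ADD`, `SUB`, `MUL`) belong to a made-up stack machine invented for this example. The function `compile_expr` turns a line of text into a list of simple instructions, and `run` carries them out one at a time, the way a processor follows machine code.

```python
import ast

# Made-up instruction names for a toy stack machine (not a real CPU).
OPCODES = {ast.Add: "ADD", ast.Sub: "SUB", ast.Mult: "MUL"}


def compile_expr(source: str) -> list[tuple]:
    """Translate an arithmetic expression such as "2 + 3 * 4" into instructions."""
    tree = ast.parse(source, mode="eval")  # Python parses the text into a tree
    program: list[tuple] = []

    def emit(node: ast.AST) -> None:
        if isinstance(node, ast.Constant) and isinstance(node.value, int):
            program.append(("PUSH", node.value))
        elif isinstance(node, ast.BinOp) and type(node.op) in OPCODES:
            emit(node.left)   # instructions for the left side first
            emit(node.right)  # then the right side
            program.append((OPCODES[type(node.op)],))  # then the operation
        else:
            raise ValueError(f"unsupported syntax: {ast.dump(node)}")

    emit(tree.body)
    return program


def run(program: list[tuple]) -> int:
    """Carry out the instructions one at a time using a stack of numbers."""
    stack: list[int] = []
    for instruction in program:
        op = instruction[0]
        if op == "PUSH":
            stack.append(instruction[1])
            continue
        right = stack.pop()
        left = stack.pop()
        if op == "ADD":
            stack.append(left + right)
        elif op == "SUB":
            stack.append(left - right)
        elif op == "MUL":
            stack.append(left * right)
        else:
            raise ValueError(f"unknown instruction {op!r}")
    return stack.pop()


program = compile_expr("2 + 3 * 4")
print(program)       # [('PUSH', 2), ('PUSH', 3), ('PUSH', 4), ('MUL',), ('ADD',)]
print(run(program))  # 14
```

Notice that the multiplication instruction comes before the addition in the compiled list: the compiler, not the machine, works out the order in which things must be done.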

History of computers

First computer

In 1837, Charles Babbage proposed the first general-purpose mechanical computer, the Analytical Engine. The Analytical Engine design included an arithmetic logic unit, basic flow control, punched cards, and integrated memory. It was the first concept for a general-purpose computer: one that could be used for many things, not only for one particular program. However, the machine was never built while Charles Babbage was alive, because he did not have enough money. In 1910, Henry Babbage, Charles Babbage's youngest son, was able to finish a part of this machine and do basic calculations.

Before the computer era there were machines that could do the same thing over and over again, like a music box. People began to want to be able to tell their machine to do different things. For example, they wanted to tell the music box to play different music every time. This part of computer history is called the "history of programmable machines", which in simple words means "the history of machines that I can order to do different things if I know how to speak their language."

One of the first examples of programmable machines was built by Hero of Alexandria (c. 10–70 AD). He built a mechanical theater that performed a 10-minute play and was operated by a complex system of ropes and drums. These ropes and drums were the language of the machine: they told the machine what to do and when. Some people argue that this is the first programmable machine.[1]

People disagree about which early machine was the first programmable one. Many say the "castle clock", an astronomical clock invented by Al-Jazari in 1206, is the first known programmable analog computer.[2][3] The lengths of day and night on the clock could be adjusted every day to match how they change throughout the year.[4] Some count this daily adjustment as computer programming.

Others say the first computer was designed by Charles Babbage.[4] Ada Lovelace is often considered to be the first programmer.[5][6][7]

The computing era

At the end of the Middle Ages, people in Europe began to treat math and engineering as more important. In 1623, Wilhelm Schickard made a mechanical calculator. Other Europeans made more calculators after him. They were not modern computers because they could only add, subtract, and multiply: you could not change what they did to make them do something else, like play Tetris. Because of this, we say they were not programmable. Now engineers use computers to design and plan.

In 1801, Joseph Marie Jacquard used punched paper cards to tell his textile loom what pattern to weave. By changing the punch cards, he could make the loom weave whatever pattern he wanted, which means the loom was programmable. At the end of the 1800s, Herman Hollerith invented a way to record data on a medium that a machine could then read, developing punched card data processing technology for the 1890 U.S. census. His tabulating machines read and summarized data stored on punched cards, and they began to be used for government and commercial data processing.

Charles Babbage wanted to make a similar machine that could calculate. He called it "The Analytical Engine".[8] Because Babbage did not have enough money and kept changing his design whenever he had a better idea, he never built his Analytical Engine.

As time went on, computers were used more. People get bored easily doing the same thing over and over. Imagine spending your life writing things down on index cards, storing them, and then having to go find them again. The U.S. Census Bureau in 1890 had hundreds of people doing just that. It was expensive, and reports took a long time. Then an engineer worked out how to make machines do a lot of the work. Herman Hollerith invented a tabulating machine that would automatically add up the information that the Census Bureau collected. The Computing Tabulating Recording Corporation (which later became IBM) made his machines. It leased the machines instead of selling them. Makers of machines had long helped their users understand and repair them, and CTR's technical support was especially good.

Machines like this led to new ways of giving instructions to machines and to new types of machines, and eventually the computer as we know it was born.

Analog and digital computers

In the first half of the 20th century, scientists started using computers, mostly because they had a lot of math to figure out and wanted to spend more time thinking about science questions instead of spending hours adding numbers together. For example, to launch a rocket, they needed to do a lot of math to make sure the rocket worked right. So they built computers. These analog computers used analog circuits, which made them very hard to program. In the 1930s, digital computers were invented, and soon they were made easier to program. Analog computers are mechanical or electronic devices that solve problems by working with continuously changing quantities, such as voltages or the turning of gears, instead of digits. Some are used to control machines as well.

High-scale computers

Scientists figured out how to make and use digital computers in the 1930s and 1940s. They built many digital computers, and as they did, they learned how to ask them the right sorts of questions to get the most out of them. Here are a few of the computers they built:

| Name | First operational | Numeral system | Computing mechanism | Programming | Turing complete |
|---|---|---|---|---|---|
| Zuse Z3 (Germany) | May 1941 | Binary | Electro-mechanical | Program-controlled by punched film stock | Yes (1998) |
| Atanasoff–Berry Computer (US) | mid-1941 | Binary | Electronic | Not programmable; single purpose | No |
| Colossus (UK) | January 1944 | Binary | Electronic | Program-controlled by patch cables and switches | No |
| Harvard Mark I – IBM ASCC (US) | 1944 | Decimal | Electro-mechanical | Program-controlled by 24-channel punched paper tape (but no conditional branch) | No |
| ENIAC (US) | November 1945 | Decimal | Electronic | Program-controlled by patch cables and switches | Yes |
| Manchester Small-Scale Experimental Machine (UK) | June 1948 | Binary | Electronic | Stored-program in Williams cathode ray tube memory | Yes |
| Modified ENIAC (US) | September 1948 | Decimal | Electronic | Program-controlled by patch cables and switches plus a primitive read-only stored programming mechanism using the Function Tables as program ROM | Yes |
| EDSAC (UK) | May 1949 | Binary | Electronic | Stored-program in mercury delay line memory | Yes |
| Manchester Mark 1 (UK) | October 1949 | Binary | Electronic | Stored-program in Williams cathode ray tube memory and magnetic drum memory | Yes |
| CSIRAC (Australia) | November 1949 | Binary | Electronic | Stored-program in mercury delay line memory | Yes |

- Konrad Zuse's electromechanical "Z machines". The Z3 (1941) was the first working machine that used binary arithmetic. Binary arithmetic means doing math with only two symbols, 1 and 0 (like "yes" and "no"). The Z3 could also be programmed. In 1998, the Z3 was proved to be Turing complete. Turing complete means that, given enough time and memory, the computer could carry out any calculation that any other computer can. It is often called the world's first modern computer.

- The non-programmable Atanasoff–Berry Computer (1941), which used vacuum tubes to calculate with "yes" and "no" values (binary digits) and stored them in regenerative capacitor memory.

- The Harvard Mark I (1944), a large electromechanical computer that could only be partly programmed: it followed instructions on punched paper tape, but it could not make conditional branches (choose what to do next based on a result).

- The U.S. Army Ballistic Research Laboratory's ENIAC (1946), which did arithmetic the way people do, using the digits 0 through 9, and is sometimes called the first general-purpose electronic computer (Konrad Zuse's Z3 of 1941 used electromagnetic relays instead of electronics). At first, however, the only way to reprogram ENIAC was by rewiring it.
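Binary arithmetic can be sketched in Python by using `True` for 1 ("yes") and `False` for 0 ("no"). The example below chains a textbook full adder across the bits of two numbers (a ripple-carry adder). It is only an illustration of adding with two symbols; it does not describe how the Z3 or any other machine listed here was actually wired. The 8-bit width is an assumption chosen for the example, so any carry out of the highest bit is dropped.

```python
def full_adder(a: bool, b: bool, carry_in: bool) -> tuple[bool, bool]:
    """Add three one-bit values (True = 1 = "yes", False = 0 = "no")."""
    sum_bit = a ^ b ^ carry_in                       # 1 if an odd number of inputs are 1
    carry_out = (a and b) or (carry_in and (a ^ b))  # 1 if at least two inputs are 1
    return sum_bit, carry_out


def to_bits(n: int, width: int) -> list[bool]:
    """Split a whole number into yes/no values, lowest bit first."""
    return [bool((n >> i) & 1) for i in range(width)]


def from_bits(bits: list[bool]) -> int:
    """Turn yes/no values (lowest bit first) back into a whole number."""
    return sum(1 << i for i, bit in enumerate(bits) if bit)


def add_binary(x: int, y: int, width: int = 8) -> int:
    """Add two numbers one bit at a time, passing the carry along."""
    carry = False
    result = []
    for a, b in zip(to_bits(x, width), to_bits(y, width)):
        bit, carry = full_adder(a, b, carry)
        result.append(bit)
    return from_bits(result)  # a carry out of the top bit is dropped


print(to_bits(6, 4))     # [False, True, True, False]
print(add_binary(6, 7))  # 13
```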

Several developers of ENIAC saw its problems. They invented a way for a computer to keep its program in memory, alongside its data, and a way to change what was stored. This is known as the "stored-program architecture" or von Neumann architecture. John von Neumann described this design in the paper First Draft of a Report on the EDVAC, distributed in 1945. Several projects to build computers based on the stored-program architecture started around this time. The first of these was completed in Great Britain. The first to be shown working was the Manchester Small-Scale Experimental Machine (SSEM or "Baby"), while EDSAC, completed a year after SSEM, was the first really useful computer that used the stored-program design. Shortly afterwards, EDVAC, the machine described in von Neumann's paper, was completed, but it was not ready for use for another two years.

Nearly all modern computers use the stored-program architecture, and it has become the main concept that defines a modern computer. The technologies used to build computers have changed since the 1940s, but many current computers still use the von Neumann architecture.
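The stored-program idea can be sketched as a tiny machine in Python, where one list holds both the instructions and the numbers they work on. The instruction set (`LOAD`, `ADD`, `STORE`, `HALT`) and the single working register are made up for this example; they are not the instructions of EDVAC, EDSAC, or any other real machine. The loop repeatedly fetches the instruction at the program counter, moves the counter forward, and carries the instruction out.

```python
def run_stored_program(memory: list) -> list:
    """Fetch, decode, and execute instructions kept in the same memory as the data."""
    pc = 0   # program counter: address of the next instruction
    acc = 0  # accumulator: the machine's single working register
    while True:
        op, *args = memory[pc]  # fetch the instruction and split it up (decode)
        pc += 1
        if op == "LOAD":        # acc = memory[address]
            acc = memory[args[0]]
        elif op == "ADD":       # acc = acc + memory[address]
            acc += memory[args[0]]
        elif op == "STORE":     # memory[address] = acc
            memory[args[0]] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown instruction {op!r} at address {pc - 1}")


# Addresses 0-3 hold instructions; addresses 4-6 hold data.
program = [
    ("LOAD", 4),   # 0: load the number at address 4
    ("ADD", 5),    # 1: add the number at address 5
    ("STORE", 6),  # 2: store the result at address 6
    ("HALT",),     # 3: stop
    20,            # 4
    22,            # 5
    0,             # 6: the answer goes here
]

print(run_stored_program(list(program))[6])  # 42

# Reprogramming means writing a different instruction into memory:
# now address 1 adds the number at address 4 instead of address 5.
changed = list(program)
changed[1] = ("ADD", 4)
print(run_stored_program(changed)[6])  # 40
```

Changing what the machine does only means writing a different instruction into memory, which is the improvement over rewiring a machine like the original ENIAC.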

In the 1950s, computers were built mostly out of vacuum tubes. Transistors replaced vacuum tubes in the 1960s because they were smaller and cheaper. They also needed less power and broke down less often than vacuum tubes. In the 1970s, computers were increasingly built from integrated circuits. Microprocessors, such as the Intel 4004, made computers smaller, cheaper, faster, and more reliable. By the 1980s, microcontrollers had become small and cheap enough to replace mechanical controls in things like washing machines. The 1980s also saw the rise of home computers and personal computers. With the growth of the Internet, personal computers have become as common in the household as the television and the telephone.

In 2005, Nokia started to call some of its mobile phones (the N-series) "multimedia computers", and after the launch of the Apple iPhone in 2007, many people started to count smartphones as "real" computers. If smartphones are counted, the biggest computer maker by units sold in 2008 was no longer Hewlett-Packard, but Nokia.[9]

Kinds of computers

There are many types of computers. Some include:

A "desktop computer" is a fairly small machine with a separate screen (the screen is not part of the computer itself). Most people keep them on top of a desk, which is why they are called "desktop computers." "Laptop computers" are computers small enough to fit on your lap, which makes them easy to carry around. Both laptops and desktops are called personal computers, because one person at a time uses them for things like playing music, surfing the web, or playing video games.

There are larger computers that can be used by many people at the same time. These are called "mainframes," and large computers like these do much of the work that makes things like the internet run. You can think of it like this: a personal computer is like your skin. You can see it, other people can see it, and through your skin you feel wind, water, air, and the rest of the world. A mainframe is more like your internal organs: you never see them, and you barely even think about them, but if they suddenly went missing, you would have some very big problems.

An embedded computer, also called an embedded system, is a computer that does one thing and one thing only, and usually does it very well. For example, an alarm clock is an embedded computer: it tells the time. Unlike your personal computer, you cannot use your clock to play Tetris. Because of this, embedded computers are often described as not programmable by their users, since you cannot install more programs on your clock. Some mobile phones, automatic teller machines, microwave ovens, CD players, and cars are operated by embedded computers.

All-in-one PC

All-in-one computers are desktop computers that have all of the computer's inner mechanisms in the same case as the monitor. Apple has made several popular examples of all-in-one computers, such as the original Macintosh of the mid-1980s and the iMac of the late 1990s and 2000s.

Uses of computers

At home

- Playing computer games

- Writing

- Solving math problems

- Watching videos

- Listening to music and audio

- Audio, video, and photo editing

- Creating sound or video

- Communicating with other people

- Using the Internet

- Online shopping

- Drawing

- Online bill payments

- Online business

At work

- Word processing

- Spreadsheets

- Presentations

- Photo editing

- Video editing/rendering/encoding

- Audio recording

- System management

- Website development

- Software development

Working methods

Computers store data and instructions as numbers, because computers can work with numbers very quickly. These numbers are stored as binary symbols (1s and 0s). A 1 or a 0 stored by a computer is called a bit, which comes from the words binary digit. Computers use many bits together to represent instructions and the data that these instructions use. A list of instructions is called a program, and it is usually stored on a storage device such as the computer's hard disk. Computers work through the program using a central processing unit (CPU), and they use fast memory called RAM (random-access memory) to hold the instructions and data while they are doing this. When the computer needs to keep the results of the program for later, it saves them to the hard disk, because things stored on a hard disk are kept even after the computer is turned off.
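How numbers and text become bits can be shown with a short Python sketch. The 8-bit width (one byte) and the UTF-8 text encoding are choices made for this example; Python's built-in `format` and `int` do the conversion between whole numbers and strings of 1s and 0s.

```python
def to_binary(n: int, width: int = 8) -> str:
    """Write a whole number as 1s and 0s, padded with zeros to `width` bits."""
    return format(n, f"0{width}b")


def from_binary(bits: str) -> int:
    """Read a string of 1s and 0s back into a whole number."""
    return int(bits, 2)


print(to_binary(13))        # 00001101
print(from_binary("1101"))  # 13

# Text is stored as numbers too: encode each character as bytes (UTF-8),
# then show each byte as 8 bits.
for byte in "Hi".encode("utf-8"):
    print(byte, to_binary(byte))
# 72 01001000
# 105 01101001
```

The same eight bits can mean different things: 01001000 is the number 72, and it is also the code for the letter H. What the bits mean depends on the program reading them.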

An operating system is a program that manages the computer: it controls which jobs the computer does, how it does them, and how it shows people the results. Millions of computers may use the same operating system, while each computer can have its own application programs to do what its user needs. Because many computers share the same operating system, it is easy to learn how to use computers for new things: a user who needs to use a computer for something different can learn how to use a new application program. Some operating systems use a simple command line, while others have a fully user-friendly graphical user interface (GUI).

The Internet

One of the most important jobs that computers do for people is helping with communication. Communication is how people share information. Computers have helped people move forward in science, medicine, business, and learning, because they let experts from anywhere in the world work with each other and share information. They also let other people communicate with each other, do their jobs almost anywhere, learn about almost anything, and share their opinions. The Internet is the worldwide network that lets people communicate between their computers. The Internet also lets computer users play online games.

Computers and waste

A computer is now almost always an electronic device. It usually contains materials that become electronic waste when it is thrown away. In some places, laws require that when a new computer is bought, the cost of managing its waste must also be paid. This is called product stewardship.

Computers can become obsolete quickly, depending on what programs the user runs. Very often, they are thrown away within two or three years, because some newer programs need a more powerful computer. This makes the waste problem worse, which is why computer recycling is common. Many projects try to send working computers to developing nations so they can be reused and will not become waste as quickly, because most people do not need to run the newest programs. Some computer parts, such as hard drives, can break easily. When these parts end up in a landfill, they can leak poisonous chemicals like lead into the groundwater. Hard drives can also contain secret information, such as credit card numbers. If a hard drive is not erased before it is thrown away, an identity thief can get the information from it, even if the drive does not work, and use it, for example, to steal money from the previous owner's bank account.

Main hardware

Computers come in different forms, but most of them have a common design.

- All computers have a CPU.

- All computers have some kind of data bus, which carries data between the CPU, memory, and the devices used for input and output.

- All computers have some form of memory. This is usually made of chips (integrated circuits) that can hold information.

- Many computers have some kind of sensors, which let them get input from their environment.

- Many computers have some kind of display device, which lets them show output. They may also have other peripheral devices connected.

A computer has several main parts. When comparing a computer to a human body, the CPU is like a brain. It does most of the thinking and tells the rest of the computer how to work. The CPU is on the motherboard, which is like the skeleton. It provides the base where the other parts go, and carries the nerves that connect them to each other and to the CPU. The motherboard is connected to a power supply, which provides electricity to the entire computer. The various drives (CD drive, floppy drive, and on many newer computers, USB flash drive) act like eyes, ears, and fingers, and allow the computer to read different types of storage, in the same way that a human can read different types of books. The hard drive is like a human's memory, and keeps track of all the data stored on the computer. Most computers have a sound card or another way of making sound, which is like vocal cords, or a voice box. Connected to the sound card are speakers, which are like a mouth, and are where the sound comes out. Computers might also have a graphics card, which helps the computer create visual effects, such as 3D environments or more realistic colors. More powerful graphics cards can make more realistic or more advanced images, in the same way a well-trained artist can.

Largest computer companies

| Company name | Sales (US $ million) |
|---|---|
| | 220,000 |
| | 212,680 |
| | 132,070 |
| | 112,300 |
| | 99,750 |
| | 87,510 |
| | 86,830 |
| | 74,450 |
| | 72,340 |
| | 70,830 |
| | 59,820 |
| | 56,940 |
| | 56,200 |
| | 54,750 |
| | 52,700 |

Computer Media

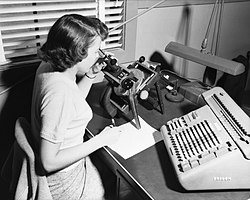

A human computer, with microscope and calculator, 1952

The Ishango bone, a bone tool dating back to prehistoric Africa

The Antikythera mechanism, dating back to ancient Greece circa 200–80 BCE, is an early analog computing device.

Slide rule, model Aristo 868 Studio, showing the calculation 1.3 x 2 = 2.6

Charles Babbage

Electro-mechanical calculator (1920) by Leonardo Torres Quevedo.

Sir William Thomson's third tide-predicting machine design, 1879–81

Replica of Konrad Zuse's Z3, the first fully automatic, digital (electromechanical) computer

Konrad Zuse, inventor of the modern computer

Video demonstrating the standard components of a "slimline" computer

References

- ↑ "Heron of Alexandria". Archived from the original on 2013-12-27. Retrieved 2008-01-15.

- ↑ Turner, Howard R. (1997). Science in Medieval Islam: An Illustrated Introduction. University of Texas Press. p. 184. ISBN 978-0-292-78149-8.

- ↑ Donald Routledge Hill, "Mechanical Engineering in the Medieval Near East", Scientific American, May 1991, pp. 64-9 (compare Donald Routledge Hill, Mechanical Engineering Archived 2007-12-25 at the Wayback Machine)

- ↑ Ancient Discoveries, Episode 11: Ancient Robots, History Channel, archived from the original on 2014-03-01, retrieved 2008-09-06

- ↑ Fuegi & Francis 2003, pp. 16–26

- ↑ (citation details unavailable)

- ↑ "Ada Lovelace honoured by Google doodle", The Guardian, Dec 10, 2012, archived from the original on 25 December 2018, retrieved 10 December 2012

- ↑ Do not confuse the Analytical Engine with Babbage's difference engine, which was a non-programmable mechanical calculator.

- ↑ Miller, Matthew. "Nokia was the world's largest computer maker in 2008". ZDNet. Archived from the original on 2020-09-23. Retrieved 2020-07-18.